{% include_example scala/org/apache/spark/examples/ml/EstimatorTransformerParamExample.scala %}

{% include_example java/org/apache/spark/examples/ml/JavaEstimatorTransformerParamExample.java %}

{% include_example python/ml/estimator_transformer_param_example.py %}

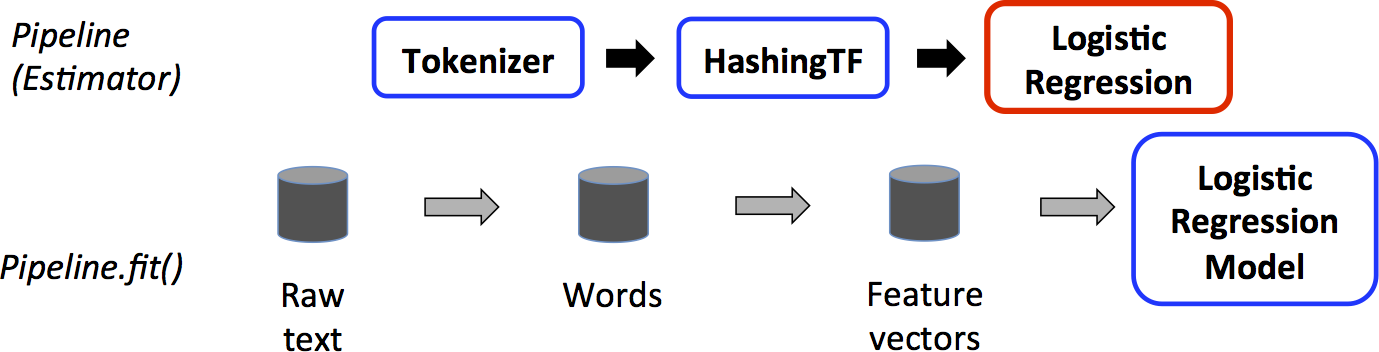

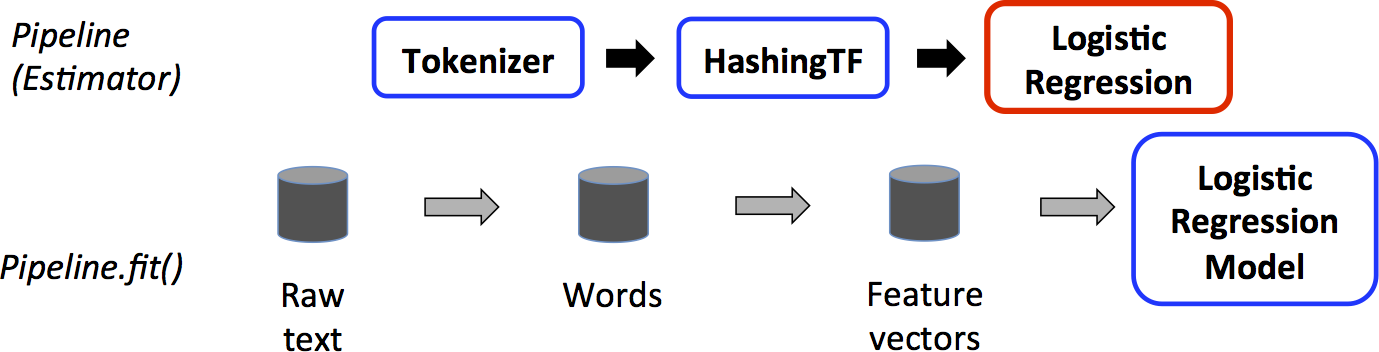

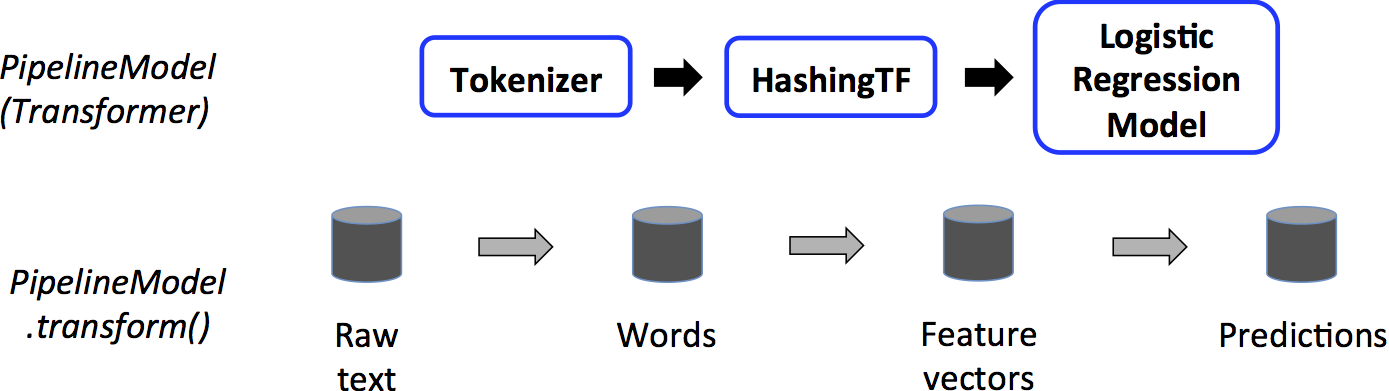

## Example: Pipeline

This example follows the simple text document `Pipeline` illustrated in the figures above.

{% include_example scala/org/apache/spark/examples/ml/PipelineExample.scala %}

{% include_example java/org/apache/spark/examples/ml/JavaPipelineExample.java %}

{% include_example python/ml/pipeline_example.py %}

## Model selection (hyperparameter tuning)

A major benefit of ML Pipelines is hyperparameter optimization: an entire `Pipeline` can be tuned at once, rather than tuning each stage separately. See the [ML Tuning Guide](ml-tuning.html) for more information on automatic model selection.
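
As a rough illustration of what tuning across stages involves, the sketch below enumerates a small grid of candidate settings in plain Python. It does not use Spark's API: `param_grid` and `expand_grid` are hypothetical names, the keys only mirror the `numFeatures` parameter of `HashingTF` and the `regParam` parameter of `LogisticRegression`, and the values are illustrative assumptions. In Spark, `ParamGridBuilder` together with `CrossValidator` or `TrainValidationSplit` plays this role.

```python
from itertools import product

# Hypothetical grid: the keys mirror Spark's HashingTF.numFeatures and
# LogisticRegression.regParam; the values are illustrative assumptions.
param_grid = {
    "hashingTF.numFeatures": [10, 100, 1000],
    "lr.regParam": [0.1, 0.01],
}


def expand_grid(grid):
    """Return one dict per combination of parameter values (Cartesian product)."""
    names = list(grid)
    value_lists = [grid[name] for name in names]
    return [dict(zip(names, values)) for values in product(*value_lists)]


candidates = expand_grid(param_grid)

# Each candidate sets parameters on a feature stage and on the estimator
# together, which is what searching over a whole pipeline means.
for params in candidates:
    print(params)
```

Each candidate would then be fitted and scored on held-out data, and the best-scoring combination kept. The grid grows multiplicatively with each added parameter (here 3 × 2 = 6 candidates), so small grids are a sensible starting point.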